Unity

Starting today, some developers can use the popular software Unity to make apps and games for Apple’s upcoming Vision Pro headset.

A partnership between Unity and Apple was first announced during Apple’s WWDC 2023 keynote last month, in the same segment in which the Vision Pro and visionOS were introduced. At that time, Apple noted that developers could start making visionOS apps immediately using SwiftUI in a new beta version of the company’s Xcode IDE for Macs, but it also promised that Unity would begin supporting Vision Pro this month.

Now it’s here, albeit in a slow, limited rollout to developers who sign up for a beta. Unity says it is gradually admitting a wide range of developers into the program over the coming weeks or months, but it hasn’t gone into much detail about the criteria it’s using to pick people, other than to say it is not focusing solely on makers of AAA games.

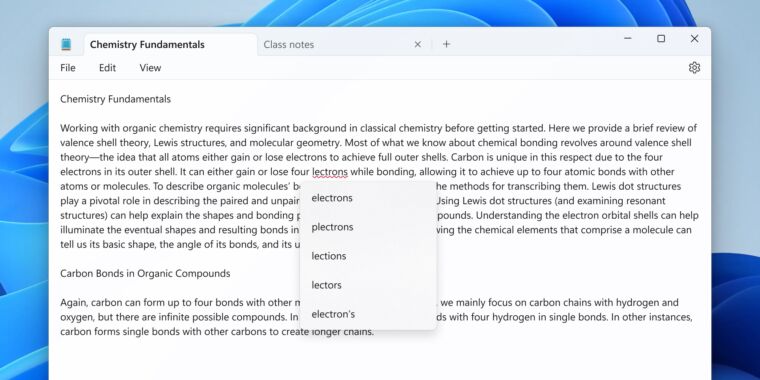

Once developers start working with it, the workflow will be familiar. It closely mirrors how they’ve already worked on iOS. They can create a project targeting the platform, generate an Xcode project from there, and quickly preview or play their work from the Unity editor via either an attached Vision Pro devkit or Xcode’s Simulator for visionOS apps.

Shared spaces, RealityKit, and PolySpatial

Unity is best known as an engine for making 2D and 3D video games, but the company offers a suite of tools that aim to make it a sort of one-stop shop for interactive content development—gaming or otherwise. The company has a long history on Apple’s platforms; many of the early 2D and 3D games on the iPhone were built with Unity, contributing to the company’s rise to fame.

Unity has since also been used to make some popular VR games and apps for PC VR, PlayStation VR and VR2, and Meta Quest platforms.

There are a handful of specific contexts in which a Unity-made app might appear on visionOS. 2D apps running in a flat window within the user’s space will be the easiest to implement. It should also be comparatively straightforward (though not necessarily trivial) to port fully immersive VR apps to the platform, assuming the project in question uses Unity’s Universal Render Pipeline (URP). If it doesn’t, the app won’t get access to features like foveated rendering, which is key to both performance and fidelity.

Still, that’s a walk in the park compared to the two other contexts. AR apps that are placed in the user’s visible physical surroundings will be more complicated, as will apps that present interactive 3D objects and spaces alongside other visionOS apps; that is, apps that support multitasking.

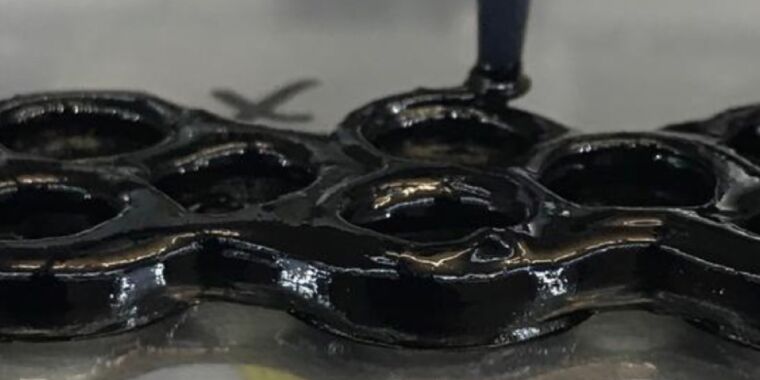

To make that possible, Unity is launching “PolySpatial,” a feature that allows apps to run in visionOS’s Shared Space. Everything in the Shared Space leans on RealityKit, so PolySpatial translates Unity materials, meshes, shaders, and so on to RealityKit. There are some limitations even within that context, so developers will sometimes have to make tweaks, build new shaders, or make other changes to get their apps running on Vision Pro.

It’s worth noting here that, purportedly in the name of privacy, visionOS does not give apps direct access to the cameras, and there’s no way to circumvent the need to work with RealityKit.

A lot of the discussion so far has been about developers adapting existing apps to get them on Vision Pro in time for the product’s launch next year, but this is also an opportunity for developers to start working on completely new apps for visionOS. Using SwiftUI and other Apple toolkits to make apps and games for visionOS has been possible for about a month now, but Unity has a robust library of tools, plugins, and other resources, particularly for making games, that will cut out a lot of the legwork compared to working in SwiftUI, at least for some projects.