Adobe

On Tuesday, Adobe announced a new symbol designed to indicate when content has been generated or altered using AI tools and to verify the provenance of non-AI media, reports The Verge. The symbol, created in collaboration with other industry players as part of the Coalition for Content Provenance and Authenticity (C2PA), aims to bring transparency to media creation and reduce the impact of misinformation or deepfakes online. Whether it will actually do so in practice is uncertain.

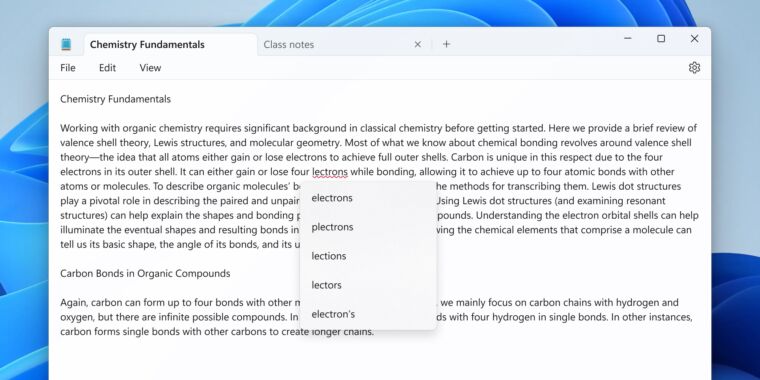

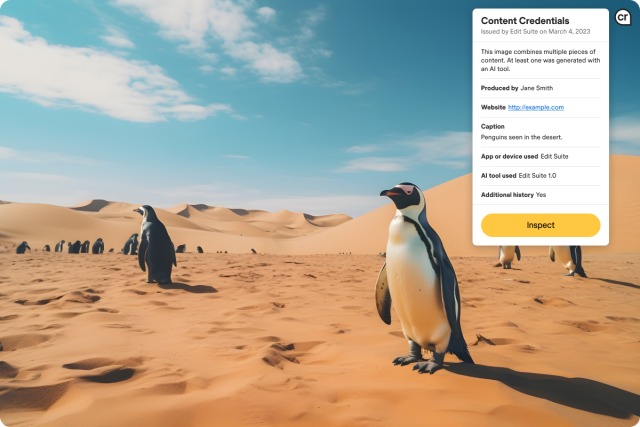

The Content Credentials symbol, which looks like a lowercase “CR” in a curved bubble with a right angle in the lower-right corner, reflects the presence of metadata stored in a PDF, photo, or video file that includes information about the content’s origin and the tools (both AI and conventional) used in its creation. The information is automatically added by supporting digital cameras and AI image generator Adobe Firefly, or it can be inserted by Photoshop and Premiere. It will also soon be supported by Bing Image Creator.

If credentialed media is presented in a compatible app or using a JavaScript wrapper on the web, users can click the “CR” icon in the upper-right corner to view a drop-down menu containing image information. Alternatively, they can upload a file to a special website to read the metadata.

Adobe’s promotional video for Content Credentials.

Adobe worked alongside organizations such as the BBC, Microsoft, Nikon, and Truepic to develop the Content Credentials system as part of the C2PA, a group aiming to establish technical standards for certifying the source and provenance of digital content. Adobe refers to the “CR” symbol as an “icon of transparency,” and the C2PA chose the initials “CR” in the symbol to stand for “credentials,” avoiding potential confusion with Creative Commons (CC) icons. Notably, the coalition owns the trademark for this new “CR” icon, which Adobe thinks will become as common as the copyright symbol in the future.


Adobe likens the content credentials to a “digital nutrition label,” or list of ingredients that make up the piece of media. “This list of ingredients will show verified information as key context so people can be sure of what they’re looking at,” the C2PA writes on its website. “This can include data about a piece of content, such as: the publisher or creator’s information, where and when it was created, what tools were used to make it, including whether or not generative AI was used, as well as any edits that were made along the way.”
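To make the “nutrition label” idea concrete, here is a minimal Python sketch of how the fields named in that quote could be grouped into one record. It is an illustration only, not the C2PA manifest format: the class names, field names, and sample values (`CredentialLabel`, `EditAction`, “Example Newsroom,” and so on) are hypothetical and were chosen just to mirror the quote.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class EditAction:
    """One step in a file's history (hypothetical shape, not the C2PA schema)."""
    tool: str
    description: str
    used_generative_ai: bool = False


@dataclass
class CredentialLabel:
    """Illustrative 'nutrition label' holding the fields the quote lists."""
    creator: Optional[str] = None
    created_where: Optional[str] = None
    created_when: Optional[str] = None
    edits: List[EditAction] = field(default_factory=list)

    def tools_used(self) -> List[str]:
        # Unique tool names, kept in the order they first appear.
        seen: List[str] = []
        for action in self.edits:
            if action.tool not in seen:
                seen.append(action.tool)
        return seen

    def involves_generative_ai(self) -> bool:
        # True if any recorded step was flagged as generative AI.
        return any(action.used_generative_ai for action in self.edits)


# Made-up sample data for illustration only.
label = CredentialLabel(
    creator="Example Newsroom",
    created_where="Unknown",
    created_when="2023-10-10",
    edits=[
        EditAction("Adobe Firefly", "Generated base image", used_generative_ai=True),
        EditAction("Photoshop", "Cropped and color-corrected"),
        EditAction("Photoshop", "Added caption text"),
    ],
)

print("Tools:", ", ".join(label.tools_used()))
print("Generative AI used:", label.involves_generative_ai())
```

A viewer built on a record like this would only be as trustworthy as the data inside it, which is why the real system is described as showing “verified information” rather than free-form metadata.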

Adobe isn’t the only company working on ways to track the provenance of AI-generated content. Google has introduced SynthID, a watermarking tool that marks AI-generated images by embedding an identifier in the image itself rather than in its metadata. Additionally, Digimarc has released a digital watermark that includes copyright information, aimed at tracking the use of data in AI training sets, according to The Verge.